The push for more powerful and efficient artificial intelligence is increasingly focused on edge computing, where AI processing happens directly on devices rather than on distant cloud servers. Recent advances in chip technology are significantly boosting on-device AI capabilities. The shift is driven by the need for faster response times, improved privacy, and reduced bandwidth consumption, especially in applications like autonomous vehicles, robotics, and advanced sensor networks. Specialized processors optimized for AI tasks are enabling a new generation of intelligent devices that perform complex computations locally, opening up uses that the constraints of cloud-based AI previously ruled out.

The Rise of Edge AI and Specialized Hardware

For years, AI workloads have been heavily reliant on cloud infrastructure, where powerful servers handle the computationally intensive tasks of training and inference. However, this centralized approach introduces latency and raises concerns about data security and privacy. Edge computing offers an alternative by bringing AI processing closer to the data source, directly onto devices like smartphones, cameras, and industrial equipment. This paradigm shift necessitates specialized hardware capable of efficiently executing AI algorithms in resource-constrained environments.

Key Benefits of Edge AI

- Reduced Latency: Processing data locally eliminates the round-trip time to the cloud, enabling real-time decision-making for critical applications.

- Enhanced Privacy: Sensitive data can be processed on-device without being transmitted to external servers, improving data security and user privacy.

- Lower Bandwidth Consumption: By performing AI tasks locally, the amount of data transmitted to the cloud is significantly reduced, lowering bandwidth costs and improving network efficiency.

- Increased Reliability: Edge devices can continue to operate even when disconnected from the internet, ensuring uninterrupted functionality in remote or unreliable network environments.
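The latency and bandwidth benefits above come down to simple arithmetic. The following is a minimal sketch of that comparison. Every number in it (network round-trip time, inference times, frame rate, frame size) is an illustrative assumption, not a measurement, and the function names are hypothetical.

```python
# Back-of-the-envelope comparison of cloud vs. on-device inference.
# All constants passed in below are assumed values for illustration only.

def cloud_latency_ms(network_rtt_ms: float, server_inference_ms: float) -> float:
    """End-to-end latency when a request travels to a remote server and back."""
    return network_rtt_ms + server_inference_ms


def edge_latency_ms(device_inference_ms: float) -> float:
    """End-to-end latency when inference runs on the device itself."""
    return device_inference_ms


def daily_upload_gb(frames_per_second: float, bytes_per_frame: int) -> float:
    """Data sent upstream per day if every frame is shipped to the cloud."""
    seconds_per_day = 24 * 60 * 60
    return frames_per_second * bytes_per_frame * seconds_per_day / 1e9


if __name__ == "__main__":
    cloud = cloud_latency_ms(network_rtt_ms=80.0, server_inference_ms=10.0)
    edge = edge_latency_ms(device_inference_ms=30.0)
    print(f"cloud: {cloud:.0f} ms, edge: {edge:.0f} ms")
    print(f"raw upload: {daily_upload_gb(15, 100_000):.1f} GB/day")
```

Under these assumptions the device is slower at the inference itself but still responds sooner, because it skips the network round trip. A camera that uploads every frame would also send well over 100 GB per day that local processing could avoid.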

New Chip Architectures Driving On-Device Power

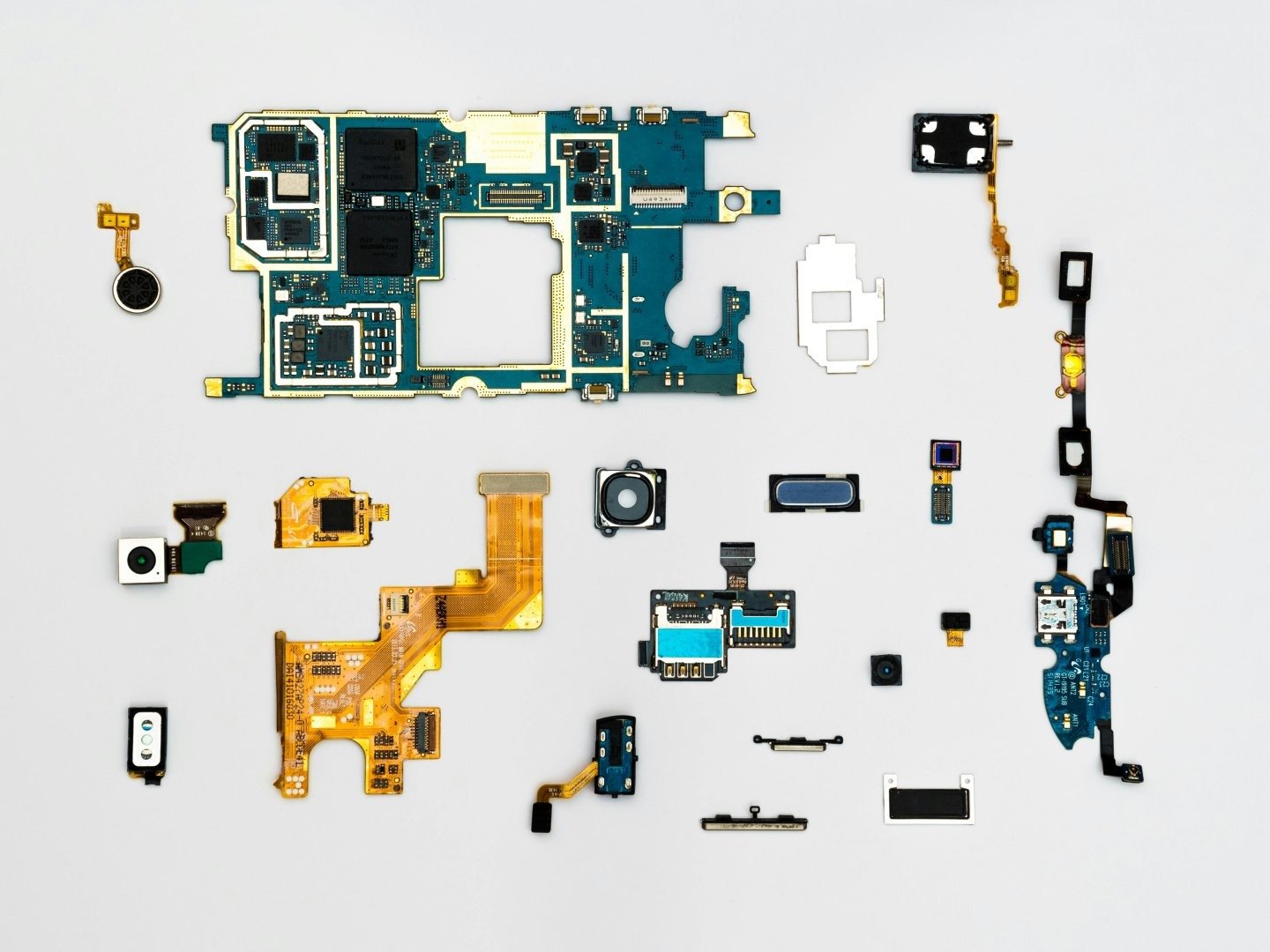

The demand for edge AI has spurred the development of chip architectures designed specifically for AI workloads. These chips often incorporate specialized processing units, such as neural processing units (NPUs) and tensor processing units (TPUs), which are optimized for the matrix multiplications and other mathematical operations at the core of deep learning algorithms. For AI tasks, such architectures can deliver considerably higher performance per watt than general-purpose CPUs, and often than GPUs as well.
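To make the reference to matrix multiplication concrete, here is a small sketch of a single fully connected layer written as explicit multiply-accumulate (MAC) operations, the primitive that NPUs are built to run in parallel. It is plain Python for readability, not how any particular chip or framework implements it, and the names `dense_forward` and `mac_count` are hypothetical.

```python
def dense_forward(x, weights, bias):
    """Compute y = x @ W + b for one input vector using plain Python lists.

    x:       list of in_features floats
    weights: in_features x out_features nested list
    bias:    list of out_features floats
    """
    outputs = []
    for j in range(len(bias)):
        acc = bias[j]
        for i in range(len(x)):
            acc += x[i] * weights[i][j]  # one multiply-accumulate (MAC)
        outputs.append(acc)
    return outputs


def mac_count(in_features: int, out_features: int) -> int:
    """Number of MACs needed for one input vector through one dense layer."""
    return in_features * out_features


if __name__ == "__main__":
    x = [1.0, 2.0]
    W = [[1.0, 0.0, -1.0],
         [0.5, 1.0, 2.0]]
    b = [0.0, 1.0, 0.0]
    print(dense_forward(x, W, b))   # [2.0, 3.0, 3.0]
    print(mac_count(1024, 1024))    # 1048576
```

A single 1024-by-1024 layer already needs about a million MACs per input, which is why hardware that performs many of them per clock cycle matters on power-constrained devices.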

Examples of Edge AI Chips

Several companies are developing cutting-edge chips tailored for edge AI applications. While specific product details and future roadmaps are constantly evolving, the general trends highlight the focus on power efficiency and specialized processing capabilities.

- Mobile Processors: Smartphone manufacturers are integrating NPUs into their mobile processors to accelerate AI tasks like image recognition, natural language processing, and augmented reality.

- Dedicated AI Accelerators: Companies are creating dedicated AI accelerator chips that can be integrated into a wide range of devices, from industrial robots to smart cameras.

- FPGA-Based Solutions: Field-programmable gate arrays (FPGAs) offer a flexible platform for implementing custom AI accelerators, allowing developers to tailor the hardware to their specific application requirements.

How Edge AI is Reshaping Enterprise AI Strategy

Advances in edge AI technology are prompting businesses to rethink their AI strategies. Companies are exploring how on-device AI can improve efficiency, reduce costs, and support new products and services. Edge AI enables real-time data analysis at the source, allowing businesses to make faster and better-informed decisions. For example, manufacturers can use edge AI to monitor equipment performance and predict maintenance needs, while retailers can use it to personalize the shopping experience for customers. As AI tools become easier to integrate, adoption is broadening across industries.
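As one illustration of the equipment-monitoring example, the sketch below flags sensor readings that deviate sharply from a short rolling window, a check light enough to run on a small device. It is an assumed, deliberately simple approach (a rolling z-score), not a method described by any vendor. The class name, window size, and threshold are hypothetical.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flags readings far from the recent average (rolling z-score)."""

    def __init__(self, window: int = 20, threshold: float = 3.0):
        self.readings = deque(maxlen=window)  # fixed memory footprint
        self.threshold = threshold

    def update(self, value: float) -> bool:
        """Return True if `value` deviates strongly from the recent window."""
        is_anomaly = False
        if len(self.readings) == self.readings.maxlen:
            mu = mean(self.readings)
            sigma = pstdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            self.readings.append(value)  # keep outliers out of the baseline
        return is_anomaly


if __name__ == "__main__":
    detector = RollingAnomalyDetector(window=5, threshold=3.0)
    vibration = [1.0, 1.1, 0.9, 1.0, 1.05, 1.02, 5.0]  # made-up readings
    for reading in vibration:
        if detector.update(reading):
            print(f"possible fault: reading {reading}")
```

Only the flagged events would need to leave the device, which is where the latency and bandwidth advantages come from.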

Use Cases Across Industries

Edge AI is finding applications in a wide range of industries, including:

- Manufacturing: Predictive maintenance, quality control, and process optimization.

- Retail: Personalized shopping experiences, inventory management, and fraud detection.

- Healthcare: Remote patient monitoring, medical image analysis, and drug discovery.

- Automotive: Autonomous driving, driver assistance systems, and in-car entertainment.

- Smart Cities: Traffic management, public safety, and energy conservation.

What Edge AI Means for Developers and AI Tools

The rise of edge AI presents both opportunities and challenges for developers. On one hand, developers can now build AI-powered applications that are faster, more responsive, and more secure. On the other hand, they need to adapt to the constraints of resource-limited edge devices and learn how to optimize their algorithms for efficient execution. This has led to new AI tools and frameworks designed specifically for edge deployment. Frameworks like TensorFlow Lite (since renamed LiteRT) and PyTorch Mobile (now succeeded by ExecuTorch) enable developers to deploy machine learning models on mobile and embedded devices.

Considerations for Edge AI Development

When developing AI applications for the edge, developers need to consider the following factors:

- Model Size and Complexity: Smaller and simpler models are generally better suited for edge devices with limited memory and processing power.

- Power Consumption: Edge devices often operate on battery power, so it’s crucial to minimize power consumption.

- Security: Edge devices are often deployed in insecure environments, so security is a paramount concern.

- Data Privacy: Protecting user data is essential, especially when processing sensitive information on-device.
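One common way to address the model-size and power considerations above is to quantize weights from 32-bit floats to 8-bit integers. The following is a minimal sketch of symmetric per-tensor quantization in plain Python. It illustrates the idea only and is not the implementation used by any specific framework. The function names are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127].

    Returns the integer values and the scale needed to map them back.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Approximate reconstruction of the original float weights."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]  # made-up example weights
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("int8 values:", q)
    print(f"worst-case error: {worst:.5f} (bound: {scale / 2:.5f})")
```

Storing one byte per weight instead of four cuts weight storage to roughly a quarter, at the cost of a rounding error of at most half the scale per weight. Whether that accuracy loss is acceptable depends on the model and has to be measured.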

Future Trends in Edge AI

The field of edge AI is evolving rapidly, and several trends are expected to shape its development. One is the integration of AI capabilities into a wider range of devices, from household appliances to industrial machinery. Another is the development of more efficient and specialized AI chips that deliver higher performance at lower power consumption. A third is progress in federated learning, which trains AI models on decentralized data sources while keeping the raw data on each device. Finally, the growing importance of edge AI is prompting regulators to consider new policies and guidelines on issues such as data privacy and security.
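The core aggregation step in federated learning can be sketched in a few lines. The example below assumes the widely used federated averaging scheme, in which a server combines client model weights in proportion to each client's local sample count. Only weights are shared, never the underlying data. The function name and the toy values are hypothetical.

```python
def federated_average(client_weights, client_sizes):
    """Average client model weights, weighted by local sample count.

    client_weights: list of weight vectors, one per client (equal length)
    client_sizes:   number of local training samples per client
    """
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


if __name__ == "__main__":
    # Two devices trained locally; the second saw three times as much data.
    updates = [[1.0, 2.0], [3.0, 4.0]]
    sizes = [1, 3]
    print(federated_average(updates, sizes))  # [2.5, 3.5]
```

A real deployment would repeat this over many rounds and typically add protections such as secure aggregation, which this sketch leaves out.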

The continued development and refinement of edge AI chips is critical to a future in which intelligent devices interact seamlessly with the world around us. This progress is not just about faster chips; it is about creating a more decentralized, efficient, and privacy-preserving AI ecosystem. As the technology matures, more applications of edge AI are likely to emerge across industries. Advances in chip architecture, software optimization techniques, and regulatory frameworks are the areas to watch.